Alibaba Cloud Container Service for Kubernetes (ACK) Setup

Deploy to ACK using the Kubernetes provider for Spinnaker.

Spinnaker’s Kubernetes provider fully supports Kubernetes-native, manifest-based deployments and is the recommended provider for deploying to Kubernetes with Spinnaker.

A Spinnaker Account maps to a credential that can authenticate against your Kubernetes Cluster.

The Kubernetes provider has two requirements:

A kubeconfig file

The kubeconfig file allows Spinnaker to authenticate against your cluster and to have read/write access to any resources you expect it to manage. You can think of it as a private key file that lets Spinnaker connect to your cluster. You can request this from your Kubernetes cluster administrator.

kubectl CLI tool

Spinnaker relies on kubectl to manage all API access. It’s installed along with Spinnaker.

Spinnaker also relies on kubectl to access your Kubernetes cluster; only kubectl fully supports many aspects of the Kubernetes API, such as 3-way merges on kubectl apply, and API discovery. Though this creates a dependency on a binary, the good news is that any authentication method or API resource that kubectl supports is also supported by Spinnaker. This is an improvement over the original Kubernetes provider in Spinnaker.
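Before registering an account, it can help to confirm that both requirements hold for the context you plan to use. The following is a minimal sketch, not part of Spinnaker: the function name check_spinnaker_access and the three permissions it probes are assumptions chosen for illustration. It relies only on kubectl auth can-i, which exits non-zero when the answer is "no".

```shell
# Hypothetical helper: checks that kubectl is installed and that the given
# kubeconfig context may perform a few operations Spinnaker typically needs.
# The probed permissions are illustrative; adjust them to your workloads.
check_spinnaker_access() {
  ctx="$1"

  # Requirement: the kubectl binary must be available.
  if ! command -v kubectl >/dev/null 2>&1; then
    echo "kubectl not found on PATH" >&2
    return 1
  fi

  # Requirement: the kubeconfig context must grant read/write access.
  # Checks run against the context's default namespace.
  for check in "list namespaces" "create deployments" "delete pods"; do
    # $check is intentionally unquoted so it splits into VERB RESOURCE.
    if ! kubectl --context "$ctx" auth can-i $check >/dev/null 2>&1; then
      echo "context $ctx cannot: $check" >&2
      return 1
    fi
  done

  echo "context $ctx looks usable"
}

# Usage (illustrative):
#   check_spinnaker_access "$(kubectl config current-context)"
```

A passing check only shows that the probed verbs are allowed; it does not prove the context covers every resource your pipelines touch.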

If you want, you can associate Spinnaker with a Kubernetes Service Account, even when managing multiple Kubernetes clusters. This can be useful if you need to grant Spinnaker certain roles in the cluster later on, or you typically depend on an authentication mechanism that doesn’t work in all environments.

Given that you want to create a Service Account in existing context $CONTEXT, the following commands will create spinnaker-service-account, and add its token under a new user called ${CONTEXT}-token-user in context $CONTEXT.

CONTEXT=$(kubectl config current-context)

# This service account uses the ClusterAdmin role -- this is not necessary,
# more restrictive roles can be applied.
kubectl apply --context $CONTEXT \
    -f /downloads/kubernetes/service-account.yml

TOKEN=$(kubectl get secret --context $CONTEXT \
   $(kubectl get serviceaccount spinnaker-service-account \
       --context $CONTEXT \
       -n spinnaker \
       -o jsonpath='{.secrets[0].name}') \
   -n spinnaker \
   -o jsonpath='{.data.token}' | base64 --decode)

kubectl config set-credentials ${CONTEXT}-token-user --token $TOKEN

kubectl config set-context $CONTEXT --user ${CONTEXT}-token-user
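On Kubernetes 1.24 and later, token Secrets are no longer generated automatically for service accounts, so the .secrets[0].name lookup above may return nothing. As a hedged alternative, the sketch below requests a token with kubectl create token instead. It assumes kubectl 1.24 or newer; the function name set_token_user and the 24h duration are illustrative choices, not values from the Spinnaker documentation.

```shell
# Hypothetical helper: request a token for spinnaker-service-account through
# the TokenRequest API and store it under ${CONTEXT}-token-user.
# Assumes kubectl 1.24+; the 24h duration is an illustrative value.
set_token_user() {
  ctx="$1"

  token=$(kubectl create token spinnaker-service-account \
    --context "$ctx" \
    -n spinnaker \
    --duration 24h) || return 1

  # Stop early rather than writing an empty credential into the kubeconfig.
  if [ -z "$token" ]; then
    echo "no token returned for context $ctx" >&2
    return 1
  fi

  kubectl config set-credentials "${ctx}-token-user" --token "$token" &&
    kubectl config set-context "$ctx" --user "${ctx}-token-user"
}

# Usage (illustrative):
#   set_token_user "$(kubectl config current-context)"
```

Tokens issued this way expire, and the API server may cap the requested duration. If you need a long-lived credential, a manually created Secret of type kubernetes.io/service-account-token is the usual alternative.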

If your Kubernetes cluster supports RBAC and you want to restrict the permissions granted to your Spinnaker account, follow the instructions below.

The following YAML creates the correct ClusterRole, ClusterRoleBinding, and ServiceAccount. If you limit Spinnaker to operating on an explicit list of namespaces (using the namespaces option), you need to use Role and RoleBinding instead of ClusterRole and ClusterRoleBinding, and apply the Role and RoleBinding to each namespace Spinnaker manages. You can read about the difference between ClusterRole and Role in the Kubernetes docs.

If you’re using RBAC to restrict the Spinnaker service account to a particular namespace, you must specify that namespace when you add the account to Spinnaker. If you don’t specify any namespaces, then Spinnaker will attempt to list all namespaces, which requires a cluster-wide role.

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: spinnaker-role
rules:
  - apiGroups: ['']
    resources:
      [
        'namespaces',
        'configmaps',
        'events',
        'replicationcontrollers',
        'serviceaccounts',
        'pods/log',
      ]
    verbs: ['get', 'list']
  - apiGroups: ['']
    resources: ['pods', 'services', 'secrets']
    verbs:
      [
        'create',
        'delete',
        'deletecollection',
        'get',
        'list',
        'patch',
        'update',
        'watch',
      ]
  - apiGroups: ['autoscaling']
    resources: ['horizontalpodautoscalers']
    verbs: ['list', 'get']
  - apiGroups: ['apps']
    resources: ['controllerrevisions']
    verbs: ['list']
  - apiGroups: ['extensions', 'apps']
    resources: ['daemonsets', 'deployments', 'deployments/scale', 'ingresses', 'replicasets', 'statefulsets']
    verbs:
      [
        'create',
        'delete',
        'deletecollection',
        'get',
        'list',
        'patch',
        'update',
        'watch',
      ]
  # These permissions are necessary for halyard to operate. We use this role also to deploy Spinnaker itself.
  - apiGroups: ['']
    resources: ['services/proxy', 'pods/portforward']
    verbs:
      [
        'create',
        'delete',
        'deletecollection',
        'get',
        'list',
        'patch',
        'update',
        'watch',
      ]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: spinnaker-role-binding
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: spinnaker-role
subjects:
  - namespace: spinnaker
    kind: ServiceAccount
    name: spinnaker-service-account
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: spinnaker-service-account
  namespace: spinnaker
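For the namespace-scoped case, where a Role and RoleBinding are applied to each managed namespace, the per-namespace manifests can be generated rather than written by hand. This is a sketch under stated assumptions: render_namespace_rbac is a hypothetical helper, and its rule list is deliberately trimmed for illustration, so carry over whichever rules from the ClusterRole your deployments actually need.

```shell
# Hypothetical helper: print a Role and RoleBinding for one namespace.
# The rules are a trimmed, illustrative subset of the ClusterRole rules.
render_namespace_rbac() {
  ns="$1"
  cat <<EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: spinnaker-role
  namespace: ${ns}
rules:
  - apiGroups: ['']
    resources: ['pods', 'services', 'secrets']
    verbs: ['create', 'delete', 'deletecollection', 'get', 'list', 'patch', 'update', 'watch']
  - apiGroups: ['apps']
    resources: ['daemonsets', 'deployments', 'replicasets', 'statefulsets']
    verbs: ['create', 'delete', 'deletecollection', 'get', 'list', 'patch', 'update', 'watch']
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: spinnaker-role-binding
  namespace: ${ns}
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: spinnaker-role
subjects:
  - namespace: spinnaker
    kind: ServiceAccount
    name: spinnaker-service-account
EOF
}

# Usage (illustrative; "staging" and "production" are placeholder namespaces):
#   for ns in staging production; do
#     render_namespace_rbac "$ns" | kubectl apply --context "$CONTEXT" -f -
#   done
```

The ServiceAccount itself still lives in the spinnaker namespace; only the Role and RoleBinding are repeated per managed namespace.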

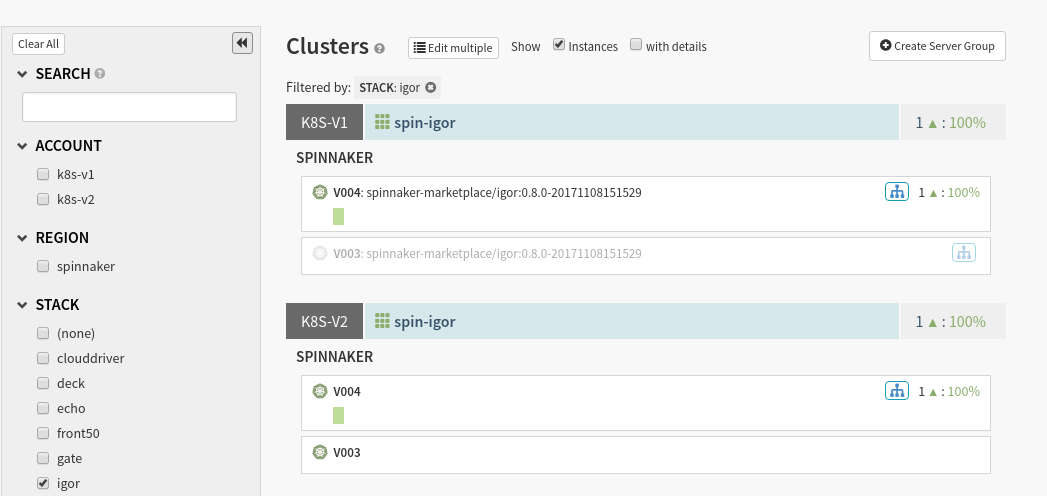

Prior to the deprecation of Spinnaker’s legacy (V1) Kubernetes provider, the standard provider was often referred to as the V2 provider. For clarity, this section refers to the providers as the V1 and V2 providers.

There is no automatic pipeline migration from the V1 provider to V2, for a few reasons:

Unlike the V1 provider, the V2 provider encourages you to store your Kubernetes manifests outside of Spinnaker in versioned backing storage, such as Git or GCS.

The V2 provider encourages you to use Kubernetes-native deployment orchestration (e.g. Deployments) instead of Spinnaker’s blue/green (red/black) strategy, where possible.

The initial operations available on Kubernetes manifests (e.g. scale, pause rollout, delete) in the V2 provider don’t map nicely to the operations in the V1 provider unless you contort Spinnaker abstractions to match those of Kubernetes. To avoid building dense and brittle mappings between Spinnaker’s logical resources and Kubernetes’s infrastructure resources, we chose to adopt the Kubernetes resources and operations more natively.

The V2 provider does not use the Docker Registry Provider.

You may still need Docker Registry accounts to trigger pipelines, but otherwise we encourage you to stop using Docker Registry accounts in Spinnaker. The V2 provider requires that you manage your private registry configuration and authentication yourself.

However, you can easily migrate your infrastructure into the V2 provider. For any V1 account you have running, you can add a V2 account following the steps below. This will surface your infrastructure twice (once per account), which helps you migrate your pipelines and operations.

A V1 and V2 provider surfacing the same infrastructure

First, make sure that the provider is enabled:

hal config provider kubernetes enable

Then add the account:

CONTEXT=$(kubectl config current-context)

hal config provider kubernetes account add my-k8s-account \

--context $CONTEXT

Finally, enable artifact support.

If you’re looking for more configurability, please see the other options listed in the Halyard Reference.
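One such option is the namespaces setting, which matters when RBAC restricts the service account to particular namespaces. The sketch below wraps the account-add command with Halyard's --namespaces flag. The helper name add_namespaced_account and the namespace names in the usage line are placeholders, not values from this guide.

```shell
# Hypothetical helper: register a Kubernetes account limited to the given
# comma-separated namespaces. Falls back to the current kubectl context
# when no context is passed as the third argument.
add_namespaced_account() {
  account="$1"
  namespaces="$2"
  ctx="${3:-$(kubectl config current-context)}"

  hal config provider kubernetes account add "$account" \
    --context "$ctx" \
    --namespaces "$namespaces"
}

# Usage (illustrative):
#   add_namespaced_account my-k8s-account staging,production
```

Listing namespaces explicitly keeps Spinnaker from trying to list every namespace, which would otherwise require a cluster-wide role.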
